Replicate GPT-2 for $73: 600× Cheaper, 3 Hours

Train a GPT-2-level model for $73 in 3 hours. Andrej Karpathy's nanochat achieves a 600× cost reduction with modern optimizations.

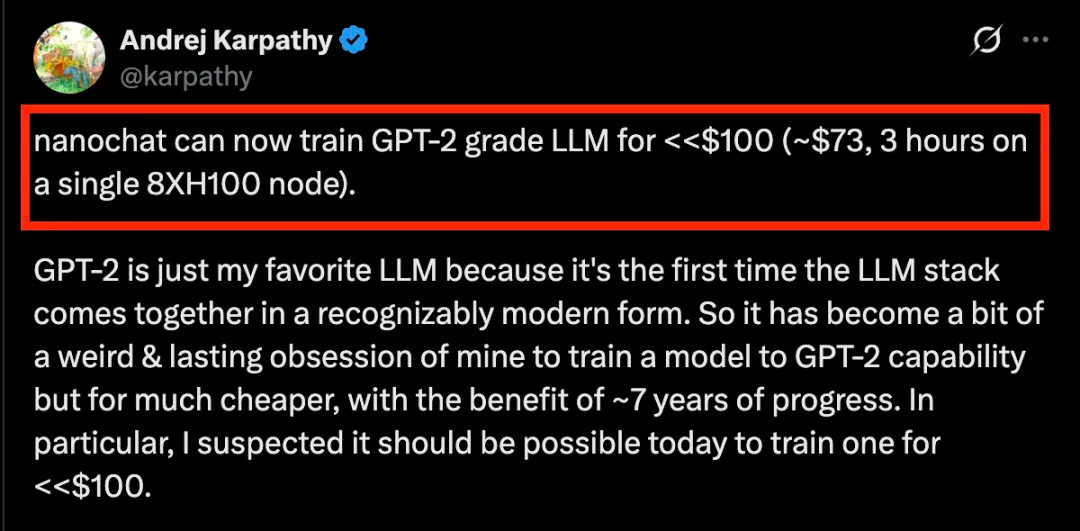

Andrej Karpathy’s personal project, nanochat, has received a major update.

Training a GPT-2-level large language model (LLM) now costs less than $100.

Specifically, on a single 8×H100 node, it takes only 3 hours and costs about $73.
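As a quick sanity check on these figures, the sketch below derives the implied hourly rate per GPU from the two numbers quoted above (8 GPUs, 3 hours, about $73). It assumes the whole cost is GPU rental billed per GPU-hour. The function name `implied_gpu_hour_rate` is hypothetical and does not come from nanochat.

```python
def implied_gpu_hour_rate(total_cost_usd: float, num_gpus: int, hours: float) -> float:
    """Return the per-GPU-hour price implied by a total training cost.

    Assumes (hypothetically) that the entire cost is GPU rental billed per GPU-hour.
    """
    gpu_hours = num_gpus * hours  # 8 GPUs running for 3 hours = 24 GPU-hours
    return total_cost_usd / gpu_hours


rate = implied_gpu_hour_rate(73.0, num_gpus=8, hours=3.0)
print(f"~${rate:.2f} per H100-hour")  # prints "~$3.04 per H100-hour"
```

In other words, the $73 figure corresponds to renting H100s at roughly $3 per GPU-hour for 24 GPU-hours.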

Karpathy openly says that GPT-2 is his favorite LLM because it marked the first time the LLM technology stack came together in its modern form. This has turned into a kind of “strange and persistent obsession” for him: using the technological progress of the past 7 years to train a model to GPT-2’s capability level at extremely low cost.

He had long suspected that, with today's technology, this could be done for under $100.

And now, nanochat has made it happen.